This write-up is an introduction to cloud workloads. It addresses questions such as: What is a workload in the cloud computing space? How are workloads classified? How many types of workloads are there?

So, without further ado.

Let’s jump right in.

1. What is a Workload in the Cloud?

Simply put, an application or a service deployed on the cloud is a workload. The service could be a massive one comprising hundreds of microservices working in conjunction or a modest solo service.

A workload is a resource running on the cloud that consumes compute and possibly storage. There are different types of workloads, which I’ll come to shortly.

The term workload signifies abstraction and portability. When a service is called a workload, it implies that it can be moved across different cloud platforms, or from on-prem to the cloud and vice versa, with minimal dependencies on the underlying infrastructure.

Container technology is a great enabler in moving workloads across platforms without breaking things.

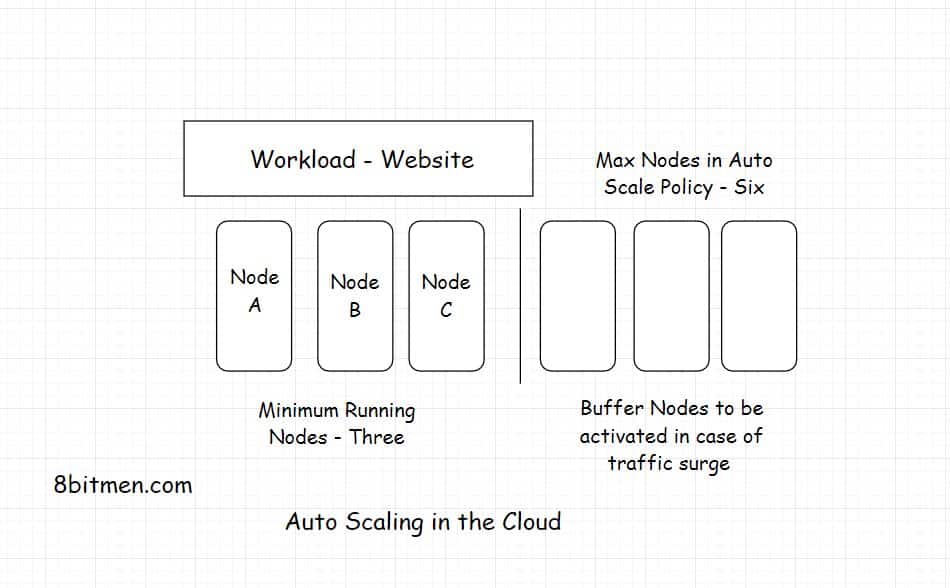

The diagram below shows a workload deployed on the cloud and run by multiple machines.

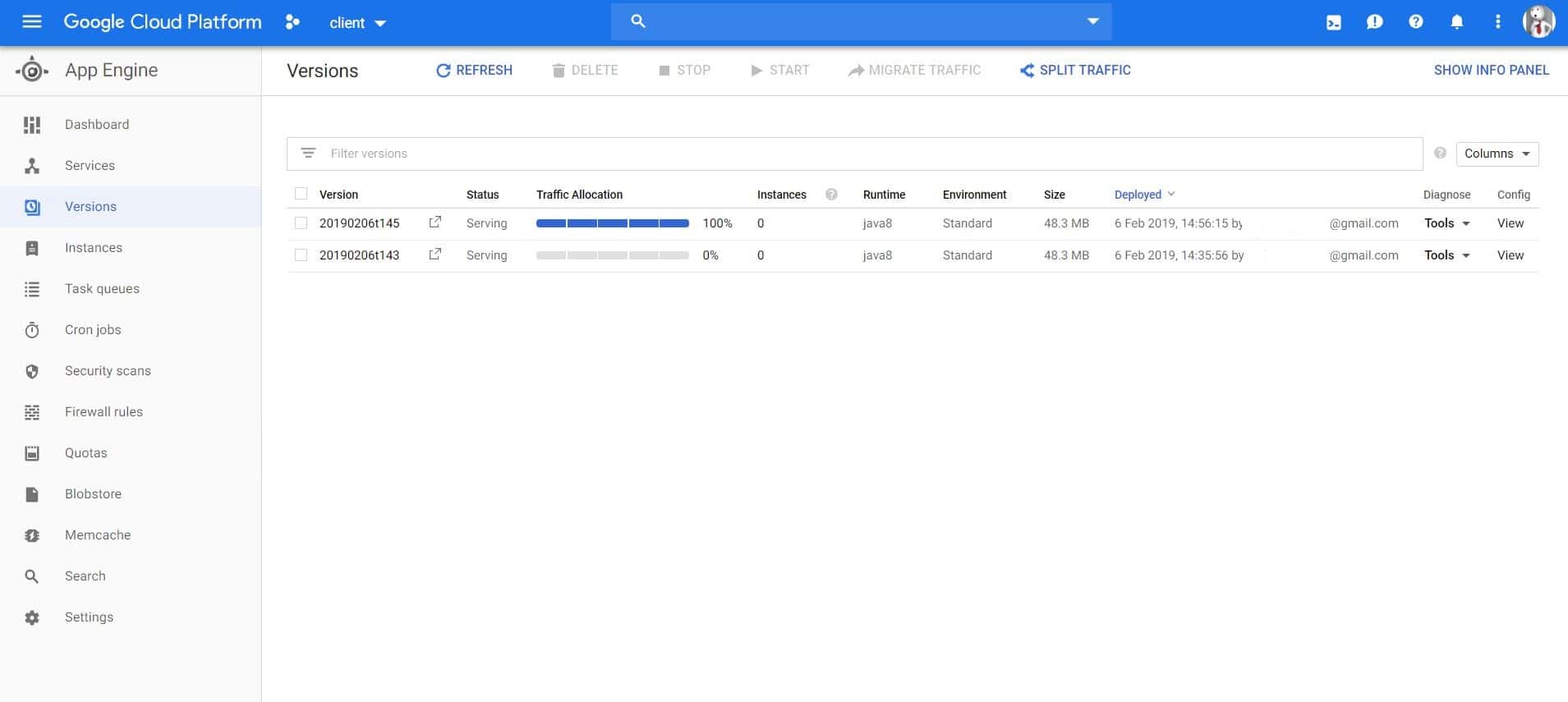

Here is a snapshot of the workload of an online browser-based game I built, deployed on Google Cloud.

As you can see in the image above, various versions of the workload have been deployed on the cloud. Every time a workload is deployed, a new version of it is created.

Having different versions of the same workload helps with A/B testing. We can switch between versions, shutting down the instances of one and spinning up the instances of another based on our needs.
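To make the idea of running several versions side by side a bit more concrete, here is a minimal Python sketch of how requests could be split between two versions of a workload for an A/B test. The version names (`v1`, `v2`), the 90/10 split and the `pick_version` helper are all made up for illustration. Cloud platforms usually handle this routing for you, and this is not how any specific provider implements it.

```python
import random
from collections import Counter

def pick_version(weights, rng):
    """Pick one version name at random, in proportion to its traffic weight."""
    versions = list(weights)
    return rng.choices(versions, weights=[weights[v] for v in versions], k=1)[0]

# Hypothetical split: 90% of requests go to the current version, 10% to the new one.
traffic_split = {"v1": 0.9, "v2": 0.1}

rng = random.Random(42)  # seeded so that repeated runs give the same result
counts = Counter(pick_version(traffic_split, rng) for _ in range(10_000))

# Roughly nine out of ten simulated requests should land on v1.
print(counts)
```

Shifting more traffic to the new version is then just a matter of changing the weights, for example to `{"v1": 0.5, "v2": 0.5}`.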

Now let’s move on to different types of workloads.

2. What are the Different Types of Workloads in the Cloud?

Workloads can be classified into several different categories based on their architecture, resource requirements, resource consumption patterns, user traffic patterns, etc.

I’ll begin with the ones classified by resource requirements.

Classified by Resource Requirements

General Compute

Workloads requiring general compute power are typically web applications, distributed data stores, containerized microservices, etc. They have no special computational requirements and run comfortably on the default capacity of cloud instances.

CPU Intensive

These workloads have high computational requirements. Typical examples are deep learning applications, highly scalable multiplayer gaming apps built to handle a large number of concurrent users, big data analytics, 3D modeling, video encoding, etc.

Memory Intensive

Memory-intensive workloads need a large amount of memory (RAM) to execute tasks. Typical examples are distributed databases, caches, real-time streaming data analytics, etc.

GPU Accelerated Computation

Workloads such as seismic analysis, computational fluid dynamics, autonomous vehicle data processing and speech recognition require the power of GPUs alongside CPUs to accelerate their tasks.

Storage Optimized Database Workloads

These workloads are primarily highly scalable NoSQL databases, in-memory databases, data warehouses etc.
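As a rough illustration of the categories above, here is a small Python sketch that maps a workload's resource profile to one of them. The `WorkloadProfile` fields, the `classify` function and the memory-per-vCPU thresholds are assumptions I have picked for the example. They are not rules from any cloud provider, and real sizing decisions come from profiling the workload.

```python
from dataclasses import dataclass

@dataclass
class WorkloadProfile:
    """Hypothetical summary of what a workload needs to run."""
    vcpus: int
    memory_gib: float
    needs_gpu: bool = False
    io_heavy: bool = False  # dominated by reads and writes to storage

def classify(profile):
    """Return the resource-based category that best matches the profile."""
    if profile.needs_gpu:
        return "GPU accelerated"
    if profile.io_heavy:
        return "storage optimized"
    gib_per_vcpu = profile.memory_gib / profile.vcpus
    if gib_per_vcpu >= 8:  # illustrative threshold: lots of memory per core
        return "memory intensive"
    if gib_per_vcpu <= 2:  # illustrative threshold: lots of cores per GiB
        return "CPU intensive"
    return "general compute"

print(classify(WorkloadProfile(vcpus=4, memory_gib=16)))    # a typical web app
print(classify(WorkloadProfile(vcpus=16, memory_gib=32)))   # e.g. video encoding
print(classify(WorkloadProfile(vcpus=4, memory_gib=64)))    # e.g. a cache
```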

Those were the workloads classified by resource requirements. Now let’s look at the workloads classified by user traffic patterns.

Classified by User Traffic Patterns

Static Workloads

These are workloads whose resource utilization is well known in advance: there are no surprises and no traffic spikes.

Such a workload can be a utility deployed on the cloud and used by a limited number of users in a private network, for instance, an organization-wide tax-calculation utility or an internal knowledge base.

Periodic Workloads

These workloads are used only at specific times, maybe a few days in a month, like an electricity bill payment app.

Serverless compute suits these kinds of applications best: there is no need to pay for idle instances, only for the compute actually used.
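Here is a back-of-the-envelope Python sketch of that cost argument. The hourly prices are hypothetical, and I have simplified pay-per-use billing to "hours of actual work", whereas real serverless pricing is usually based on requests and execution time. The point is only to show why paying a higher rate for a few busy days can still beat paying a lower rate for the whole month.

```python
HOURS_PER_MONTH = 730  # average number of hours in a month

def always_on_cost(hourly_rate, hours=HOURS_PER_MONTH):
    """Monthly cost of an instance that runs all month, busy or idle."""
    return hourly_rate * hours

def pay_per_use_cost(hourly_rate, busy_hours):
    """Monthly cost when billing covers only the hours spent doing work."""
    return hourly_rate * busy_hours

def break_even_hours(always_on_rate, pay_per_use_rate):
    """Busy hours per month at which both options cost the same."""
    return always_on_rate * HOURS_PER_MONTH / pay_per_use_rate

# Hypothetical prices: pay-per-use costs 3x more per hour of actual work.
ALWAYS_ON_RATE = 0.10
PAY_PER_USE_RATE = 0.30
busy_hours = 5 * 24  # the app is busy for roughly five days a month

print(f"always-on:   ${always_on_cost(ALWAYS_ON_RATE):.2f}")
print(f"pay-per-use: ${pay_per_use_cost(PAY_PER_USE_RATE, busy_hours):.2f}")
print(f"break-even:  {break_even_hours(ALWAYS_ON_RATE, PAY_PER_USE_RATE):.0f} busy hours")
```

With these made-up numbers, the periodic workload stays cheaper on pay-per-use as long as it is busy for fewer hours than the break-even point.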

Unpredictable Workloads

These workloads include popular apps like social networks, online multiplayer games, video and game streaming apps, etc.

In these workloads, traffic can spike sharply and without warning. For instance, right after launch, Pokemon Go’s traffic grew to up to 50x what was anticipated.

In these scenarios, the auto-scaling ability of the cloud saves the day by dynamically adding instances to the fleet as and when required.
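To give a feel for how an autoscaler might decide how many instances to add, here is a minimal Python sketch of a target-utilization rule: scale the fleet in proportion to how far the current utilization is from the target. The function name, the parameters and the default limits are assumptions for illustration. Kubernetes' Horizontal Pod Autoscaler documents a formula of this shape, but real autoscalers add cooldowns, smoothing and other safeguards that are left out here.

```python
import math

def desired_instances(current, current_util_pct, target_util_pct,
                      min_instances=1, max_instances=100):
    """Return the fleet size that would bring utilization back to the target."""
    if current < 1 or target_util_pct <= 0:
        raise ValueError("need at least one instance and a positive target")
    # Proportional rule: twice the target utilization means twice the instances.
    wanted = math.ceil(current * current_util_pct / target_util_pct)
    # Never go below the floor or above the ceiling set for the fleet.
    return max(min_instances, min(max_instances, wanted))

# Four instances running at 90% CPU with a 60% target: scale out.
print(desired_instances(4, 90, 60))   # → 6

# Ten instances running at 30% CPU with a 60% target: scale in.
print(desired_instances(10, 30, 60))  # → 5
```

The `max_instances` cap matters for unpredictable workloads: a sudden 50x spike would otherwise ask for 50x the fleet, and the bill that comes with it.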

Hybrid Workloads

These workloads are a hybrid of the workload types described above. For instance, a hybrid workload may require a mix of infrastructure capabilities, such as GPU power along with high instance memory, while also facing unpredictable traffic.

Well, there is no limit to the architectural complexity in online services. We will talk about this in another article. For now, this is pretty much it. If you liked the article, do share it with your network.

Mastering the Fundamentals of the Cloud

If you wish to master the fundamentals of cloud computing, check out my platform-agnostic Cloud Computing 101 course. It is part of the Zero to Mastering Software Architecture learning track, a series of three courses I have written to teach you, step by step, software architecture and distributed system design. The learning track takes you from having no knowledge of the domain to being a pro at designing large-scale distributed systems like YouTube, Netflix, Hotstar, and more.

I’ll see you in the next write-up.

Until then.

Cheers!

Shivang
